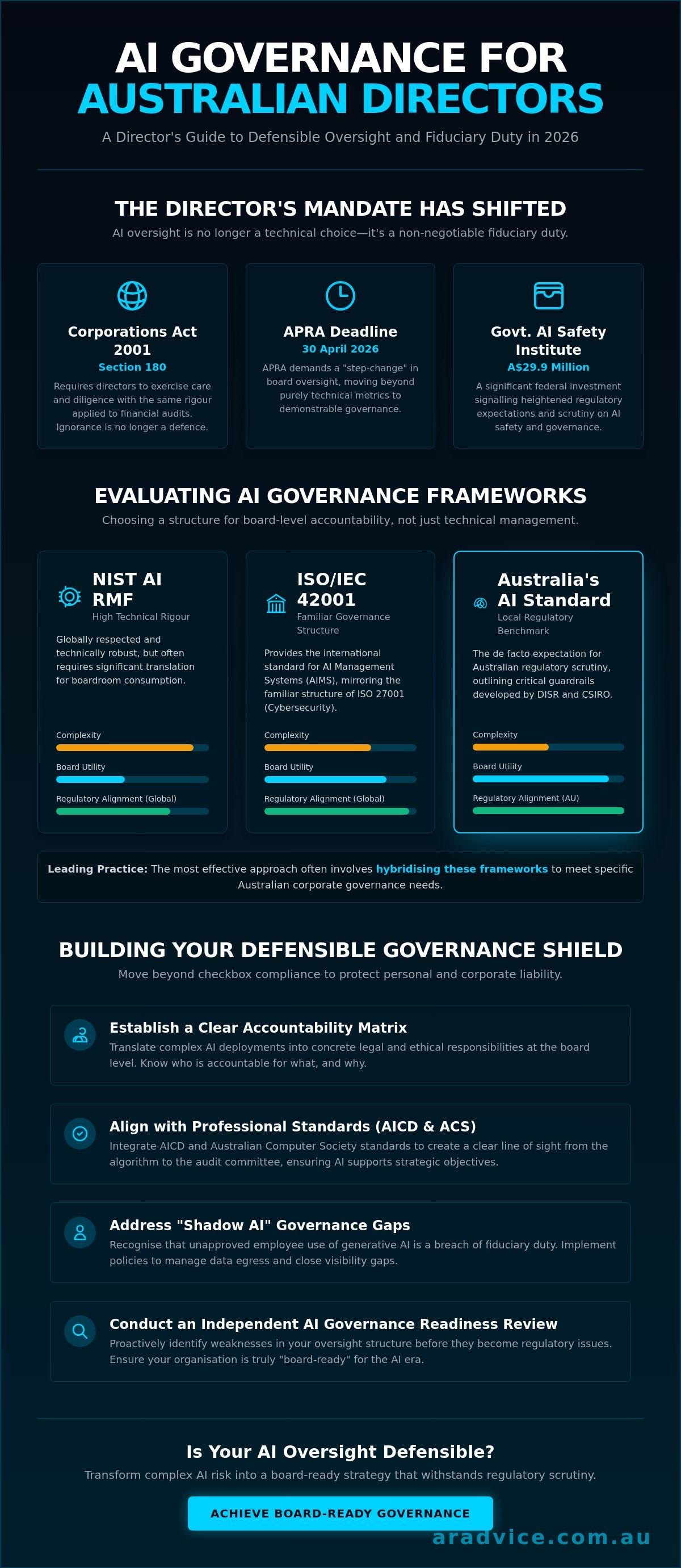

If you cannot explain how your company’s latest AI implementation aligns with your risk appetite, you aren't just facing a technical glitch; you are courting a breach of fiduciary duty. On 30 April 2026, APRA demanded a "step-change" in oversight, making it clear that technical metrics are no longer sufficient for board-level accountability. You likely feel the weight of the Corporations Act and the growing visibility gap created by shadow AI. Adopting a robust AI risk management framework for directors is no longer optional for those seeking to meet AICD and ACS standards.

We understand that distinguishing between material business risk and AI hype is a constant challenge in the boardroom. This guide promises to transform complex data into a defensible 2026 oversight strategy that withstands regulatory scrutiny. We will examine the essential guardrails of the National AI Plan and provide a structured pathway to achieve board-ready reporting that prioritises legal accountability over IT jargon. By the end of this briefing, you'll have the tools to ensure your governance keeps pace with the national focus on AI safety signalled by the Australian Government's A$29.9 million investment in the new AI Safety Institute.

Key Takeaways

- Understand why Section 180 of the Corporations Act 2001 (Cth) transforms AI oversight from a technical choice into a non-negotiable fiduciary duty for Australian directors.

- Learn to distinguish between high-rigour technical frameworks like the NIST AI RMF and governance-centric standards like ISO/IEC 42001 to select the most effective AI risk management framework for directors.

- Discover how to establish a clear Accountability Matrix that translates complex AI deployments into concrete legal and ethical responsibility at the board level.

- Identify the critical steps for conducting an independent AI Governance Readiness Review to ensure your organisation meets the professional standards set by the AICD and ACS.

- Build a defensible governance shield that moves beyond checkbox compliance to protect both personal and corporate liability against increasing regulatory scrutiny.

The Director’s Mandate: Why AI Risk is a Fiduciary Duty in 2026

Governance isn't a technical hurdle; it's a legal shield. In 2026, an AI risk management framework for directors serves as the primary defence against personal and corporate liability. If an AI system generates biased outcomes or leaks sensitive intellectual property, the regulator won't ask your CTO about the code. It will ask the board about its oversight. Section 180 of the Corporations Act 2001 (Cth) makes this oversight a non-negotiable priority. It requires directors to exercise their powers and discharge their duties with the degree of care and diligence that a reasonable person in their position would exercise; for AI, that means applying the same rigour the board already brings to financial reporting. In the current climate, ignorance of AI's "black box" logic is no longer a valid defence.

You must move beyond the technical "Is it safe?" and ask the Director’s Question: "Is our oversight defensible?" This shift requires a deep understanding of AI safety principles to ensure that alignment and robustness are monitored at the governance level. Technical risk is a system failure. Governance risk is a failure of accountability. While IT reports on system uptime and data throughput, we reveal the hidden gaps in legal and ethical readiness that threaten your standing as a director.

Aligning with AICD and ACS Standards

The AICD’s 2024-2026 guidelines emphasise that boards must be "AI-ready" through structured education and proactive risk appetite setting. Integrating ACS professional standards into your AI risk management framework for directors creates a clear line of sight from the algorithm to the audit committee. This structure ensures that every AI deployment remains within the bounds of your strategic objectives and professional obligations. It transforms abstract technology into a concrete accountability matrix that the board can actually manage.
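
To make "proactive risk appetite setting" concrete, here is a minimal Python sketch of how a board might test each AI deployment against a stated appetite. It assumes a conventional 5×5 likelihood × impact risk matrix; the deployment names, the scores and the appetite threshold of 9 are hypothetical and are not drawn from AICD or ACS guidance.

```python
from dataclasses import dataclass

# Hypothetical risk appetite: the highest inherent risk score (likelihood x impact,
# each rated 1-5) the board accepts without escalation.
RISK_APPETITE_THRESHOLD = 9


@dataclass
class AIDeployment:
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (minor) to 5 (severe)

    @property
    def inherent_risk(self) -> int:
        # Conventional risk-matrix score: likelihood multiplied by impact.
        return self.likelihood * self.impact


def outside_appetite(deployments: list[AIDeployment],
                     threshold: int = RISK_APPETITE_THRESHOLD) -> list[str]:
    """Return the names of deployments whose inherent risk exceeds the appetite."""
    return [d.name for d in deployments if d.inherent_risk > threshold]


if __name__ == "__main__":
    portfolio = [
        AIDeployment("Customer service chatbot", likelihood=3, impact=2),      # score 6
        AIDeployment("Credit decisioning model", likelihood=3, impact=5),      # score 15
        AIDeployment("Internal document summariser", likelihood=2, impact=3),  # score 6
    ]
    print(outside_appetite(portfolio))  # ['Credit decisioning model']
```

Scores at or below the threshold are treated as within appetite; anything above it becomes a candidate for board escalation. In practice the scales and the threshold should come from the board's own risk appetite statement.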

The High Stakes of Generative AI Oversight

Shadow AI represents a hidden breach of fiduciary duty. When employees use unapproved generative tools, they create unmanaged data egress that boards often fail to detect. This lack of visibility isn't just an IT issue; it's a governance failure. To protect the organisation, directors should draw on guidance such as Cyber Governance for Boards Australia to bridge the gap between technical reporting and real-world liability. Without this visibility, you're flying blind into a storm of regulatory scrutiny.
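
One way to surface shadow AI is to compare outbound traffic against the list of approved tools. The Python sketch below assumes the organisation can export proxy or gateway logs as (user, destination domain, bytes sent) records; the domains use the reserved `.example` suffix and are placeholders, not a vetted catalogue of generative AI services.

```python
from collections import defaultdict
from typing import NamedTuple


class ProxyRecord(NamedTuple):
    user: str
    domain: str
    bytes_sent: int


# Placeholder domains (reserved .example TLD); a real list would be maintained centrally.
KNOWN_GENAI_DOMAINS = {
    "chat.genai-one.example",
    "api.genai-two.example",
    "gen.genai-three.example",
}
# The subset of generative AI tools the organisation has formally approved.
APPROVED_GENAI_DOMAINS = {"api.genai-two.example"}


def shadow_ai_egress(records: list[ProxyRecord]) -> dict[str, int]:
    """Total bytes sent to unapproved generative AI domains, keyed by domain."""
    unapproved = KNOWN_GENAI_DOMAINS - APPROVED_GENAI_DOMAINS
    egress: dict[str, int] = defaultdict(int)
    for record in records:
        if record.domain in unapproved:
            egress[record.domain] += record.bytes_sent
    return dict(egress)


if __name__ == "__main__":
    logs = [
        ProxyRecord("alice", "chat.genai-one.example", 120_000),
        ProxyRecord("bob", "api.genai-two.example", 50_000),     # approved tool
        ProxyRecord("alice", "chat.genai-one.example", 30_000),
        ProxyRecord("carol", "intranet.corp.example", 10_000),   # not generative AI
    ]
    print(shadow_ai_egress(logs))  # {'chat.genai-one.example': 150000}
```

Bytes sent is a crude proxy for data egress: it tells the board where to look, not what was disclosed.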

Evaluating AI Frameworks: Choosing a Structure for Your Board

Selecting an AI risk management framework for directors is not a technical procurement exercise. It is a strategic decision that determines how your board will discharge its duty of care. While technical teams often gravitate toward complexity, directors require clarity and defensibility. The goal is to move from a "black box" of technical metrics to a transparent structure that supports informed decision-making. You must choose a framework that doesn't just manage code, but manages accountability.

The NIST AI Risk Management Framework offers high technical rigour. It is widely respected globally but often requires significant translation for the boardroom. It focuses heavily on technical robustness, which is necessary but insufficient for governance. Conversely, ISO/IEC 42001 provides the international standard for AI Management Systems (AIMS). It mirrors the structure of ISO/IEC 27001, making it familiar to boards already overseeing cybersecurity. However, the most critical benchmark for local operations is Australia’s Voluntary AI Safety Standard. This framework, developed by DISR and CSIRO, outlines 10 guardrails that are becoming the de facto expectation for Australian regulatory scrutiny.

NIST vs. ISO vs. Australian Standards

No single framework is a silver bullet. Leading boards often hybridise these structures to meet specific Australian corporate governance needs. The following table highlights the trade-offs in complexity and utility:

| Framework | Complexity | Board Utility | Regulatory Alignment |
|---|---|---|---|
| NIST AI RMF | High | Moderate | Global / US |
| ISO/IEC 42001 | Moderate | High | International |
| AU Voluntary Standard | Moderate | High | Australia (ASIC/APRA) |

Bridging the Governance Gap

Technical implementation is only half the battle. Effective tech consulting for boards must focus on oversight rather than just the deployment of tools. When a framework is too technical, it creates a "governance gap" where the board loses visibility into material risk. You must move beyond checkbox compliance to strategic resilience. This involves filtering framework outputs through a "Board-Ready" lens. Does this report tell me if we are meeting our fiduciary duties? If not, the framework is failing you. To ensure your current approach is actually defensible, consider an independent AI Governance Readiness Review to identify hidden vulnerabilities before they become liabilities.

Implementing Defensible Oversight: The AI Governance Readiness Review

Transitioning from a theoretical understanding to active governance requires a structured execution plan. A robust AI risk management framework for directors is only as effective as its implementation. It begins with a clear Accountability Matrix for every AI deployment. This document must explicitly map technical outputs to executive owners, ensuring that liability is never diluted by technical complexity. When an AI system fails, the board must already know exactly which individual is responsible for the oversight of that specific use case.
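
An Accountability Matrix can be as simple as a register that flags any deployment without a named executive owner. A minimal Python sketch follows; the field names (`use_case`, `executive_owner`, `board_committee`) and the example entries are hypothetical, not a format prescribed by the AICD or ACS.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MatrixEntry:
    use_case: str
    executive_owner: Optional[str]  # a named individual, not a team or a vendor
    board_committee: str            # committee receiving reporting (hypothetical label)


def _has_owner(entry: MatrixEntry) -> bool:
    # Blank or whitespace-only names count as "no owner".
    return bool(entry.executive_owner and entry.executive_owner.strip())


def ownership_gaps(matrix: list[MatrixEntry]) -> list[str]:
    """Return every use case that lacks a named executive owner."""
    return [entry.use_case for entry in matrix if not _has_owner(entry)]


def owner_for(matrix: list[MatrixEntry], use_case: str) -> str:
    """Answer the board's question: who is accountable for this use case?"""
    for entry in matrix:
        if entry.use_case == use_case:
            if not _has_owner(entry):
                raise LookupError(f"{use_case!r} has no accountable executive owner")
            return entry.executive_owner
    raise KeyError(f"{use_case!r} is not registered in the Accountability Matrix")


if __name__ == "__main__":
    matrix = [
        MatrixEntry("Fraud detection model", "Chief Risk Officer", "Risk Committee"),
        MatrixEntry("Marketing copy generator", None, "Audit Committee"),
    ]
    print(ownership_gaps(matrix))                      # ['Marketing copy generator']
    print(owner_for(matrix, "Fraud detection model"))  # Chief Risk Officer
```

Raising an error for an unregistered or unowned use case reflects the point above: a deployment the matrix cannot answer for is itself a governance finding.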

The most critical step in this process is conducting an independent AI Governance Readiness Review. This review provides the objective verification required to bridge the gap between what IT reports and what the board actually needs to know. Beyond the review, reporting metrics must be redefined. You must demand "Board-Ready" data that bypasses technical jargon like "latency" or "epochs" in favour of "compliance drift" and "material risk exposure." Finally, your board must engage in facilitated incident simulations. These high-stakes exercises test your collective response to AI-driven crises before they escalate into public regulatory failures.
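
To illustrate how technical telemetry might be translated into a "compliance drift" figure, the Python sketch below assumes each AI system is periodically assessed against a fixed set of governance controls. Here "compliance drift" is defined, purely as an illustrative assumption, as the percentage-point change in the share of controls passing between a baseline and the latest assessment; the control names and the 10-point escalation tolerance are hypothetical.

```python
def pass_rate(results: dict[str, bool]) -> float:
    """Share of governance controls passing, as a percentage."""
    if not results:
        raise ValueError("no control results supplied")
    return 100.0 * sum(results.values()) / len(results)


def compliance_drift(baseline: dict[str, bool], latest: dict[str, bool]) -> float:
    """Percentage-point change in pass rate; negative means compliance is eroding."""
    if baseline.keys() != latest.keys():
        raise ValueError("baseline and latest must assess the same controls")
    return pass_rate(latest) - pass_rate(baseline)


def board_summary(system: str, baseline: dict[str, bool], latest: dict[str, bool],
                  tolerance_pp: float = 10.0) -> str:
    """One plain-language line for the board pack, with no model metrics."""
    drift = compliance_drift(baseline, latest)
    status = "ESCALATE" if drift < -tolerance_pp else "Within tolerance"
    return f"{system}: compliance drift {drift:+.1f} pp ({status})"


if __name__ == "__main__":
    baseline = {"human_review": True, "bias_testing": True,
                "data_lineage": True, "incident_logging": True}
    latest = {"human_review": True, "bias_testing": False,
              "data_lineage": True, "incident_logging": False}
    print(board_summary("Credit decisioning model", baseline, latest))
    # Credit decisioning model: compliance drift -50.0 pp (ESCALATE)
```

The design choice is deliberate: the board sees one signed number and a status, while the control-level detail stays available for management to explain.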

The Role of Independent Advisory

Internal IT teams cannot provide the independent verification required for truly defensible oversight. They are often too close to the implementation to recognise systemic governance gaps or "optimism bias" in their reporting. A "No Conflicts of Interest" advisor serves as a rare ally in a market crowded with vendors. By remaining independent from technical implementation, we provide the intellectual rigour and transparency necessary to expose uncomfortable truths about checkbox compliance. This independence is what creates a foundation of trust between the board and its advisors.

Reputational Protection for Directors

A structured framework is your primary asset during a regulatory inquiry. With the first mandatory AI requirements for non-corporate Commonwealth entities starting on 15 June 2026, the pace of regulation is accelerating. Having a documented, audited oversight process can be the difference between a minor correction and a major penalty under the Corporations Act. This structure protects your professional standing by proving you exercised due care and diligence. For deeper insights into managing these high-level strategic responsibilities, we provide specialised guidance for directors seeking to fortify their governance posture.

Fortifying Your Boardroom against the Governance Gap

The shift from technical AI metrics to board-level accountability is the defining governance challenge of 2026. You now recognise that oversight is a non-delegable fiduciary duty under Section 180 of the Corporations Act. Relying solely on internal technical reporting leaves a visibility gap that regulators like APRA and ASIC will not overlook. Implementing a robust AI risk management framework for directors ensures you move beyond checkbox compliance to a position of defensible readiness.

Effective governance requires independent, conflict-free verification that aligns with AICD and ACS standards. Our exclusive 48-hour readiness review methodology provides the intellectual rigour needed to bridge the gap between technical teams and the board. This process identifies critical vulnerabilities and delivers actionable insights within a timeframe that respects the pace of executive leadership. It's about ensuring your oversight stands up to the highest levels of regulatory scrutiny.

Don't leave your reputation to chance. Secure your board with an Independent AI Governance Readiness Review today. You can navigate the complexities of AI with confidence and strategic clarity.

Frequently Asked Questions

What is the most important AI risk for an Australian director to manage?

The most critical risk is the governance gap where the board lacks visibility into material AI use cases. Technical failures are secondary to the risk of failing to demonstrate defensible oversight. Without a structured accountability matrix, directors remain exposed to personal liability if shadow AI leads to a breach of privacy or intellectual property rights. You must ensure that your visibility matches your legal responsibility.

How does the Corporations Act 2001 apply to AI failures?

Section 180 of the Corporations Act 2001 (Cth) applies by holding directors personally accountable for failures in due care and diligence. If an AI system causes material harm, the legal focus is on whether the board took reasonable steps to understand and manage the risk. Although the mandatory requirements starting on 15 June 2026 apply to non-corporate Commonwealth entities, they offer a useful benchmark for the minimum standard of care that Australian regulators are likely to expect from all directors.

Can a board delegate AI risk management to the IT department?

Oversight of AI is a non-delegable fiduciary duty that the board must retain. While IT manages technical execution, the board must implement an AI risk management framework for directors to ensure independent verification of management's claims. Relying solely on technical teams creates a conflict of interest that undermines your defensibility. Governance requires a clear separation between those who implement the technology and those who oversee the risk.

How often should a board review its AI governance framework?

Boards should review their governance frameworks at least annually; however, the 30 April 2026 APRA mandate suggests a more frequent cadence for high-stakes industries. Quarterly reviews are becoming the benchmark for maintaining a defensible position in a rapidly evolving 2026 regulatory environment. These reviews must include updated risk assessments and incident simulations to ensure the organisation's resilience matches the current pace of technological escalation.